[iOS] Splitting and merging CPU architectures in a framework

12 Monday Dec 2016

If the framework you use supports multiple CPU architectures for both devices and simulators, but you need only one or a few of them, you can split the individual architectures out of the framework and then merge just the ones you need. An example of the approach follows:

18 Monday Jul 2016

Posted in Uncategorized

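(Article source: 鸡窝在线天使投行)

In the investment world, FA stands for Financial Advisor, also known as a "new-style investment bank". Its core role is to provide professional third-party services for corporate fundraising. Financial advisors play a pivotal role while a company raises money and grows. Companies such as JD.com (京东) and Jumei (聚美优品) were served by professional FAs through most of their growth. An FA is definitely not a tout handing out flyers everywhere; a truly reliable FA understands investing even better than an investment manager does.

Objectively speaking, financial advisors have a mediocre reputation in China's capital industry. The natural mission of this group, which moves between investors and companies, should be to eliminate the information asymmetry between the two sides of a deal. As someone once put it: "They have no money of their own, but because they are professional and know many people at VCs, PE firms, and investment banks, they help you find money from outside; in that sense it is a bit like pimping."

Still, a truly reliable FA needs real skill.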

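The value of an FA

A professional FA can provide targeted services. An FA knows the tastes and styles of the mainstream investment firms and can find the best match; with the FA's own reputation as an endorsement, the company can reach the decision-makers at those firms; introductions to several different firms help when negotiating deal terms; and having the FA broker the deal largely avoids the appearance of being overshopped, which helps the fundraising succeed.

· Helping you shape your fundraising story

1) Analyzing which comparable projects exist in the market and what stage each has reached;

2) Discovering and distilling the project's strengths, and presenting them in the language investors use;

3) Giving professional advice on the fundraising plan, such as when to raise, how much to raise in the first round versus the second, and what valuation to set.

· Helping you connect with suitable investors

1) Introducing the firms most likely to understand you, and the right project managers inside them, to reduce the odds of talking past each other;

2) Mediating as a third party to facilitate communication.

· Helping you coordinate the whole process from term sheet to closing

1) Acting as a sympathetic confidant when investor after investor has turned you down, calming you and restoring your confidence;

2) Giving professional advice on negotiating the investment contract.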

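What is a fake FA

They lack a basic understanding of the investment industry and do not know how investors work or what they prefer. Once they take on a project, they blast it out everywhere. If you find your BP (business plan) circulating in all sorts of chat groups and forums, beware: you may have run into a fake FA. Think about it: would you trust someone hawking luxury goods on the street?

They charge high upfront fees. Professional FAs in the industry charge on the back end and take no fee before they have raised money for you, because by the time they accept the engagement they are already fairly confident they can raise the funds. Of course, a professional FA also performs strict due diligence on a project and does not lightly take on one with little chance of success. After all, if an FA only ever sent investors so-called one-of-a-kind projects, would investors still read that FA's emails after a while?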

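How to tell a real FA from a fake one

It is actually simple; a few questions will tell you:

1. Ask them to list which of the top 50 firms would not look at your project (the top 50 firms differ considerably in investment stage and sector, and several of them focus on specific sectors and stages).

2. From the firms that might be interested in your project, pick two at random and ask them to analyze who inside each firm would be the right person to look at it. Then ask them to show you whether they are in regular contact with people at that firm.

Especially for first-time founders, the best way to spot an unreliable FA is this: before the investor proposes doing DD (due diligence) on you, first do your own DD on the investor in front of you by talking to other people in the investment community and to the companies he has served.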

27 Saturday Jun 2015

Posted in Uncategorized

Src:http://www.badrit.com/blog/2013/11/18/redis-vs-mongodb-performance#.VY2-qROqpBc

MongoDB is an open source document database written in C++, and the leading NoSQL database. Redis is also an open source NoSQL database, but it is a key-value store rather than a document database. Redis is often referred to as a data structure server, since keys can contain strings, hashes, lists, sets and sorted sets.

Here’s a simple benchmark in node.js that compares the performance of Redis and MongoDB by timing writes and reads for both. For Redis I used the node.js Redis client, and for MongoDB I used the node.js MongoDB driver.

And here’s the code

In case of redis:

    var redis = require("redis")
      , client = redis.createClient()
      , numberOfElements = 50000;

    // Clear the current database first so earlier runs do not skew the timings.
    client.flushdb(function (err, reply) {
        redisWrite();
    });

    function redisWrite() {
        var remaining = numberOfElements; // replies still outstanding
        console.time('redisWrite');
        for (var i = 0; i < numberOfElements; i++) {
            client.set(i, "some fantastic value " + i, function (err, data) {
                if (--remaining === 0) {
                    console.timeEnd('redisWrite');
                    redisRead();
                }
            });
        }
    }

    function redisRead() {
        var remaining = numberOfElements;
        console.time('redisRead');
        for (var i = 0; i < numberOfElements; i++) {
            client.get(i, function (err, reply) {
                if (--remaining === 0) {
                    console.timeEnd('redisRead');
                    client.quit(); // close the connection so the process can exit
                }
            });
        }
    }

In case of MongoDB:

    var MongoClient = require('mongodb').MongoClient
      , numberOfElements = 50000;

    MongoClient.connect('mongodb://127.0.0.1:27017/test', function (err, db) {
        var collection = db.collection('benchmark');
        collection.ensureIndex({id: 1}, {}, console.log);
        // remove any existing documents from the collection first
        collection.remove({}, function (err) {
            mongoWrite(collection, db);
        });
    });

    function mongoWrite(collection, db) {
        var remaining = numberOfElements; // callbacks still outstanding
        console.time('mongoWrite');
        for (var i = 0; i < numberOfElements; i++) {
            collection.insert({id: i, value: "some fantastic value " + i}, function (err, docs) {
                if (--remaining === 0) {
                    console.timeEnd('mongoWrite');
                    mongoRead(collection, db);
                }
            });
        }
    }

    function mongoRead(collection, db) {
        var remaining = numberOfElements;
        console.time('mongoRead');
        for (var i = 0; i < numberOfElements; i++) {
            collection.findOne({id: i}, function (err, results) {
                if (--remaining === 0) {
                    console.timeEnd('mongoRead');
                    db.close();
                }
            });
        }
    }

Results were measured using MongoDB 2.4.8 and Redis 2.6.16

Machine Specifications

| Number of entries | Redis Read | Mongo Read | Redis Write | Mongo Write |
|---|---|---|---|---|
| 10 | 2 | 5 | 5 | 8 |
| 100 | 13 | 11 | 8 | 34 |
| 1,000 | 38 | 93 | 31 | 153 |
| 10,000 | 238 | 980 | 220 | 1394 |
| 50,000 | 958 | 5218 | 979 | 8713 |

Calculated time in milliseconds (lower is better)

From the results we can see that Mongo and Redis take almost equal time for a small number of entries, but as the number of entries increases, Redis becomes markedly faster than Mongo.

The results will vary according to your programming language and also according to the specifications of your machine.
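To put a number on that gap, the timings in the table can be turned into ratios. The following is only a small sketch: the arrays are copied from the table above, while the class name `BenchmarkRatios` and the helper `ratio` are made up for illustration.

```java
// Timings in milliseconds copied from the results table; names are illustrative only.
class BenchmarkRatios {
    static final int[] ENTRIES     = {10, 100, 1_000, 10_000, 50_000};
    static final int[] REDIS_READ  = {2, 13, 38, 238, 958};
    static final int[] MONGO_READ  = {5, 11, 93, 980, 5218};
    static final int[] REDIS_WRITE = {5, 8, 31, 220, 979};
    static final int[] MONGO_WRITE = {8, 34, 153, 1394, 8713};

    // How many times longer MongoDB took than Redis for the same workload.
    static double ratio(int mongoMillis, int redisMillis) {
        return (double) mongoMillis / redisMillis;
    }

    public static void main(String[] args) {
        for (int i = 0; i < ENTRIES.length; i++) {
            System.out.println(ENTRIES[i] + " entries: read ratio "
                    + ratio(MONGO_READ[i], REDIS_READ[i])
                    + ", write ratio " + ratio(MONGO_WRITE[i], REDIS_WRITE[i]));
        }
    }
}
```

At 50,000 entries this works out to MongoDB taking roughly 5.4 times as long as Redis for reads and roughly 8.9 times as long for writes, on this particular machine and setup.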

29 Friday May 2015

Posted in Uncategorized

The Servlet 4.0 specification is out and Tomcat 9.0.x will support it. However, at this point Tomcat 8.0.x is the best Tomcat version, and it supports the Servlet 3.1 specification.

Since OS X 10.7 Java is not (pre-)installed anymore, let’s fix that first.

As I’m writing this, Java 8u45 is the latest version, available for download here: http://www.oracle.com/technetwork/java/javase/downloads/jdk8-downloads-2133151.html

The JDK installer package comes in a dmg and installs easily on the Mac. After opening the Terminal app again,

java -version

now shows something like this:

java version "1.8.0_45"

Java(TM) SE Runtime Environment (build 1.8.0_45-b14)

Java HotSpot(TM) 64-Bit Server VM (build 25.45-b02, mixed mode)

Whatever you do, when you open Terminal and run java -version, you should see something like this, with a version of at least 1.7.x, i.e. Tomcat 8.x requires Java 7 or later.

JAVA_HOME is an important environment variable, not just for Tomcat, and it’s important to get it right. Here is a trick that allows me to keep the environment variable current, even after a Java Update was installed. In ~/.bash_profile, I set the variable like so:

export JAVA_HOME=$(/usr/libexec/java_home)

Here are the easy-to-follow steps to get it up and running on your Mac:

sudo mkdir -p /usr/local

sudo mv ~/Downloads/apache-tomcat-8.0.22 /usr/local

sudo rm -f /Library/Tomcat

sudo ln -s /usr/local/apache-tomcat-8.0.22 /Library/Tomcat

sudo chown -R <your_username> /Library/Tomcat

sudo chmod +x /Library/Tomcat/bin/*.sh

Instead of using the start and stop scripts, like so:

47 wolf:~$ /Library/Tomcat/bin/startup.sh

Using CATALINA_BASE: /Library/Tomcat

Using CATALINA_HOME: /Library/Tomcat

Using CATALINA_TMPDIR: /Library/Tomcat/temp

Using JRE_HOME: /Library/Java/JavaVirtualMachines/jdk1.8.0_45.jdk/Contents/Home

Using CLASSPATH: /Library/Tomcat/bin/bootstrap.jar:/Library/Tomcat/bin/tomcat-juli.jar

Tomcat started.

48 wolf:~$ /Library/Tomcat/bin/shutdown.sh

Using CATALINA_BASE: /Library/Tomcat

Using CATALINA_HOME: /Library/Tomcat

Using CATALINA_TMPDIR: /Library/Tomcat/temp

Using JRE_HOME: /Library/Java/JavaVirtualMachines/jdk1.8.0_45.jdk/Contents/Home

Using CLASSPATH: /Library/Tomcat/bin/bootstrap.jar:/Library/Tomcat/bin/tomcat-juli.jar

49 wolf:~$

you may also want to check out Activata’s Tomcat Controller, a tiny freeware app, providing a UI to quickly start/stop Tomcat. It may not say so, but Tomcat Controller works on OS X 10.10 just fine.

Finally, after you have started Tomcat, open your Mac’s Web browser and take a look at the default page: http://localhost:8080

Reference: https://wolfpaulus.com/jounal/mac/tomcat8/

29 Friday May 2015

Posted in Uncategorized

This tutorial will walk you through setting up a user on your MySQL server to connect remotely.

The following items are assumed:

You will need to know the IP address you are connecting from. To find this you can go to one of the following sites:

Granting access to a user from a remote host is fairly simple and can be accomplished in just a few steps. First you will need to log in to your MySQL server as the root user. You can do this by typing the following command:

# mysql -u root -p

This will prompt you for your MySQL root password.

Once you are logged into MySQL you need to issue the GRANT command that will enable access for your remote user. In this example we will be creating a brand new user (fooUser) that will have full access to the fooDatabase database.

Keep in mind that this statement is not complete and will need some items changed. Please change 1.2.3.4 to the IP address that we obtained above. You will also need to replace my_password with the password that you would like to use for fooUser.

mysql> GRANT ALL ON fooDatabase.* TO fooUser@'1.2.3.4' IDENTIFIED BY 'my_password';

This statement will grant ALL permissions to the newly created user fooUser with a password of ‘my_password’ when they connect from the IP address 1.2.3.4.

Now you can test your connection remotely. You can access your MySQL server from another Linux server:

    # mysql -u fooUser -p -h 44.55.66.77
    Enter password:
    Welcome to the MySQL monitor. Commands end with ; or \g.
    Your MySQL connection id is 17
    Server version: 5.0.45 Source distribution

    Type 'help;' or '\h' for help. Type '\c' to clear the buffer.

    mysql> _

Note that the IP of our MySQL server is 44.55.66.77 in this example.

There are a few things to note when setting up these remote users:

Reference:

https://www.rackspace.com/knowledge_center/article/mysql-connect-to-your-database-remotely

http://www.cyberciti.biz/tips/how-do-i-enable-remote-access-to-mysql-database-server.html

25 Wednesday Mar 2015

Posted in Uncategorized

This post is about automatically generating sitemaps. I chose this topic because it is fresh in my mind, as I have recently started using sitemaps for pickat.sg. After some research I came to the conclusion this would be a good thing: at the time of the posting Google had 3171 URLs indexed for the website (it has been live for 3 months now), whereas after generating sitemaps there were 87,818 URLs submitted. I am curious how many will get indexed after that…

Because I didn’t want to enter over 80k URLs manually, I had to come up with an automated solution. Because the Pickat mobile app was developed with Java Spring, it was easy for me to select sitemapgen4j.

As I refer to the article from others, you may see different methods, please focus on the logic.

Check out the latest version here:

    <dependency>
        <groupId>com.google.code</groupId>
        <artifactId>sitemapgen4j</artifactId>
        <version>1.0.1</version>
    </dependency>

The podcasts from pickat.sg have an associated update frequency (DAILY, WEEKLY, MONTHLY, TERMINATED, UNKNOWN), so it made sense to organize sub-sitemaps that make use of the lastMod and changeFreq properties accordingly. This way you can modify the lastMod of the daily sitemap in the sitemap index without modifying the lastMod of the monthly sitemap, and the Google bot doesn’t need to check the monthly sitemap every day.

Method : createSitemapForPodcastsWithFrequency – generates one sitemap file

    /**
     * Creates sitemap for podcasts/episodes with update frequency
     *
     * @param updateFrequency update frequency of the podcasts
     * @param sitemapsDirectoryPath the location where the sitemap will be generated
     */
    public void createSitemapForPodcastsWithFrequency(
            UpdateFrequencyType updateFrequency, String sitemapsDirectoryPath) throws MalformedURLException {

        // number of URLs counted
        int nrOfURLs = 0;

        File targetDirectory = new File(sitemapsDirectoryPath);
        WebSitemapGenerator wsg = WebSitemapGenerator.builder("http://www.podcastpedia.org", targetDirectory)
                .fileNamePrefix("sitemap_" + updateFrequency.toString()) // name of the generated sitemap
                .gzip(true) // recommended - as it decreases the file's size significantly
                .build();

        // reads reachable podcasts with episodes from the database for the given update frequency
        List<Podcast> podcasts = readDao.getPodcastsAndEpisodeWithUpdateFrequency(updateFrequency);
        for (Podcast podcast : podcasts) {
            String url = "http://www.podcastpedia.org" + "/podcasts/" + podcast.getPodcastId() + "/" + podcast.getTitleInUrl();
            WebSitemapUrl wsmUrl = new WebSitemapUrl.Options(url)
                    .lastMod(podcast.getPublicationDate()) // date of the last published episode
                    .priority(0.9) // high priority, just below the start page which has a default priority of 1
                    .changeFreq(changeFrequencyFromUpdateFrequency(updateFrequency))
                    .build();
            wsg.addUrl(wsmUrl);
            nrOfURLs++;

            for (Episode episode : podcast.getEpisodes()) {
                url = "http://www.podcastpedia.org" + "/podcasts/" + podcast.getPodcastId() + "/" + podcast.getTitleInUrl()
                        + "/episodes/" + episode.getEpisodeId() + "/" + episode.getTitleInUrl();

                // build websitemap url
                wsmUrl = new WebSitemapUrl.Options(url)
                        .lastMod(episode.getPublicationDate()) // publication date of the episode
                        .priority(0.8) // high priority but smaller than podcast priority
                        .changeFreq(changeFrequencyFromUpdateFrequency(UpdateFrequencyType.TERMINATED))
                        .build();
                wsg.addUrl(wsmUrl);
                nrOfURLs++;
            }
        }

        // One sitemap can contain a maximum of 50,000 URLs.
        if (nrOfURLs <= 50000) {
            wsg.write();
        } else {
            // in this case multiple files will be created and sitemap_index.xml file describing the files which will be ignored
            // workaround to resolve the issue described at http://code.google.com/p/sitemapgen4j/issues/attachmentText?id=8&aid=80003000&name=Admit_Single_Sitemap_in_Index.patch&token=p2CFJZ5OOE5utzZV1UuxnVzFJmE%3A1375266156989
            wsg.write();
            wsg.writeSitemapsWithIndex();
        }
    }

The generated file contains URLs to podcasts and episodes, with changeFreq and lastMod set accordingly.
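The method above calls a helper named changeFrequencyFromUpdateFrequency that the post does not show. A minimal sketch of what such a mapping might look like is given below. The two enums are local stand-ins so the example compiles on its own (the real code would use the library's change-frequency type), and the choice for UNKNOWN is purely an assumption; only MONTHLY to monthly and TERMINATED to never can be inferred from the generated XML in this post.

```java
// Update frequencies named in the post.
enum UpdateFrequencyType { DAILY, WEEKLY, MONTHLY, TERMINATED, UNKNOWN }

// Local stand-in for the sitemap change-frequency values (hypothetical, not the library type).
enum ChangeFreq { DAILY, WEEKLY, MONTHLY, NEVER }

class ChangeFrequencyMapper {
    // Maps a podcast update frequency to the changefreq value written to the sitemap.
    static ChangeFreq changeFrequencyFromUpdateFrequency(UpdateFrequencyType updateFrequency) {
        switch (updateFrequency) {
            case DAILY:
                return ChangeFreq.DAILY;
            case WEEKLY:
                return ChangeFreq.WEEKLY;
            case MONTHLY:
                return ChangeFreq.MONTHLY;
            case TERMINATED:
                // Finished podcasts and published episodes no longer change.
                return ChangeFreq.NEVER;
            default:
                // UNKNOWN: assumed here to be re-checked weekly; the post does not say.
                return ChangeFreq.WEEKLY;
        }
    }

    public static void main(String[] args) {
        for (UpdateFrequencyType type : UpdateFrequencyType.values()) {
            System.out.println(type + " -> " + changeFrequencyFromUpdateFrequency(type));
        }
    }
}
```

Episodes are always passed TERMINATED in the method above, which is why every episode URL ends up with a changefreq of never.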

Snippet from the generated sitemap_MONTHLY.xml:

    <?xml version="1.0" encoding="UTF-8"?>
    <urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <url>
        <lastmod>2013-07-05T17:01+02:00</lastmod>
        <changefreq>monthly</changefreq>
        <priority>0.9</priority>
      </url>
      <url>
        <lastmod>2013-07-05T17:01+02:00</lastmod>
        <changefreq>never</changefreq>
        <priority>0.8</priority>
      </url>
      <url>
        <lastmod>2013-03-11T15:40+01:00</lastmod>
        <changefreq>never</changefreq>
        <priority>0.8</priority>
      </url>
      .....
    </urlset>

After sitemaps are generated for all update frequencies, a sitemap index is generated to list all the sitemaps. This file will be submitted in Google Webmaster Tools.

Method : createSitemapIndexFile

    /**
     * Creates a sitemap index from all the files from the specified directory excluding the test files and sitemap_index.xml files
     *
     * @param sitemapsDirectoryPath the location where the sitemap index will be generated
     */
    public void createSitemapIndexFile(String sitemapsDirectoryPath) throws MalformedURLException {
        File targetDirectory = new File(sitemapsDirectoryPath);
        // output file for the generated sitemap index
        File outFile = new File(sitemapsDirectoryPath + "/sitemap_index.xml");
        // "sig" is used below but its declaration is missing from the listing; assumed to be:
        SitemapIndexGenerator sig = new SitemapIndexGenerator("http://www.podcastpedia.org", outFile);
        // get all the files from the specified directory
        File[] files = targetDirectory.listFiles();
        for (int i = 0; i < files.length; i++) {
            // && rather than ||, otherwise the condition is true for every file name
            boolean isNotSitemapIndexFile = !files[i].getName().startsWith("sitemap_index") && !files[i].getName().startsWith("test");
            if (isNotSitemapIndexFile) {
                SitemapIndexUrl sitemapIndexUrl = new SitemapIndexUrl("http://www.podcastpedia.org/" + files[i].getName(), new Date(files[i].lastModified()));
                sig.addUrl(sitemapIndexUrl);
            }
        }
        sig.write();
    }

The process is quite simple: the method looks in the folder where the sitemap files were created and generates a sitemap index from these files, setting the lastmod value to the time each file was last modified (line 18).
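The file selection is the one part of that method that is easy to get subtly wrong, so here is a small stand-alone sketch of it. The class and method names are hypothetical and the file names are only examples. The point it illustrates is that the two exclusions have to be joined with a logical AND: joined with OR, the test is true for every name, because no file name starts with both prefixes at once.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class SitemapFileFilter {
    // A file belongs in the index only if it is neither the index itself nor a test file.
    static boolean isSitemapFile(String fileName) {
        return !fileName.startsWith("sitemap_index") && !fileName.startsWith("test");
    }

    // Keeps only the names that should be listed in the sitemap index, in their original order.
    static List<String> selectSitemapFiles(List<String> fileNames) {
        List<String> selected = new ArrayList<>();
        for (String name : fileNames) {
            if (isSitemapFile(name)) {
                selected.add(name);
            }
        }
        return selected;
    }

    public static void main(String[] args) {
        // Example directory listing (made-up names following the "sitemap_" + frequency prefix).
        List<String> names = Arrays.asList(
                "sitemap_DAILY.xml", "sitemap_index.xml", "test.xml", "sitemap_MONTHLY.xml");
        System.out.println(selectSitemapFiles(names));
    }
}
```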

Et voilà sitemap_index.xml:

    <?xml version="1.0" encoding="UTF-8"?>
    <sitemapindex xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
      <sitemap>
        <lastmod>2013-08-01T07:24:38.450+02:00</lastmod>
      </sitemap>
      <sitemap>
        <lastmod>2013-08-01T07:25:01.347+02:00</lastmod>
      </sitemap>
      <sitemap>
        <lastmod>2013-08-01T07:25:10.392+02:00</lastmod>
      </sitemap>
      <sitemap>
        <lastmod>2013-08-01T07:26:33.067+02:00</lastmod>
      </sitemap>
      <sitemap>
        <lastmod>2013-08-01T07:24:53.957+02:00</lastmod>
      </sitemap>
    </sitemapindex>

With this approach, I get to schedule how often the sitemap is generated. And because generation happens outside of an HTTP request, I can afford a longer time for it to complete.

Having previous experience with the framework, Spring Batch was my obvious choice. It provides a framework for building batch jobs in Java. Spring Batch works with the idea of “chunk processing” wherein huge sets of data are divided and processed as chunks.

I then searched for a Java library for writing sitemaps and came up with SitemapGen4j. It provides an easy-to-use API and is released under Apache License 2.0.

Requirements

My requirements are simple: I have a couple of static web pages which can be hard-coded to the sitemap. I also have pages for each place submitted to the web site; each place is stored as a single row in the database and is identified by a unique ID. There are also pages for each registered user; similar to the places, each user is stored as a single row and is identified by a unique ID.

A job in Spring Batch is composed of 1 or more “steps”. A step encapsulates the processing needed to be executed against a set of data.

I identified 4 steps for my job:

Step 1

Because it does not involve processing a set of data, my first step can be implemented directly as a simple Tasklet:

    public class StaticPagesInitializerTasklet implements Tasklet {
        private static final Logger logger = LoggerFactory.getLogger(StaticPagesInitializerTasklet.class);

        private final String rootUrl;

        @Inject
        private WebSitemapGenerator sitemapGenerator;

        public StaticPagesInitializerTasklet(String rootUrl) {
            this.rootUrl = rootUrl;
        }

        @Override
        public RepeatStatus execute(StepContribution contribution, ChunkContext chunkContext) throws Exception {
            logger.info("Adding URL for static pages...");
            sitemapGenerator.addUrl(rootUrl);
            sitemapGenerator.addUrl(rootUrl + "/terms");
            sitemapGenerator.addUrl(rootUrl + "/privacy");
            sitemapGenerator.addUrl(rootUrl + "/attribution");
            logger.info("Done.");
            return RepeatStatus.FINISHED;
        }

        public void setSitemapGenerator(WebSitemapGenerator sitemapGenerator) {
            this.sitemapGenerator = sitemapGenerator;
        }
    }

The starting point of a Tasklet is the execute() method. Here, I add the URLs of the known static pages of CheckTheCrowd.com.

Step 2

The second step requires places data to be read from the database then subsequently written to the sitemap.

This is a common requirement, and Spring Batch provides built-in Interfaces to help perform these types of processing:

The Spring Batch API includes a class called JdbcCursorItemReader, an implementation of ItemReader which continuously reads rows from a JDBC ResultSet. It requires a RowMapper, which is responsible for mapping database rows to batch items.

For this step, I declare a JdbcCursorItemReader in my Spring configuration and set my implementation of RowMapper:

    @Bean
    public JdbcCursorItemReader<PlaceItem> placeItemReader() {
        JdbcCursorItemReader<PlaceItem> itemReader = new JdbcCursorItemReader<>();
        itemReader.setSql(environment.getRequiredProperty(PROP_NAME_SQL_PLACES));
        itemReader.setDataSource(dataSource);
        itemReader.setRowMapper(new PlaceItemRowMapper());
        return itemReader;
    }

Line 4 sets the SQL statement to query the ResultSet. In my case, the SQL statement is fetched from a properties file.

Line 5 sets the JDBC DataSource.

Line 6 sets my implementation of RowMapper.

Next, I write my implementation of ItemWriter:

    public class PlaceItemWriter implements ItemWriter<PlaceItem> {
        private static final Logger logger = LoggerFactory.getLogger(PlaceItemWriter.class);

        private final String rootUrl;

        @Inject
        private WebSitemapGenerator sitemapGenerator;

        public PlaceItemWriter(String rootUrl) {
            this.rootUrl = rootUrl;
        }

        @Override
        public void write(List<? extends PlaceItem> items) throws Exception {
            String url;
            for (PlaceItem place : items) {
                url = rootUrl + "/place/" + place.getApiId() + "?searchId=" + place.getSearchId();
                logger.info("Adding URL: " + url);
                sitemapGenerator.addUrl(url);
            }
        }

        public void setSitemapGenerator(WebSitemapGenerator sitemapGenerator) {
            this.sitemapGenerator = sitemapGenerator;
        }
    }

Places in CheckTheCrowd.com are accessible from URLs having this pattern: checkthecrowd.com/place/{placeId}?searchId={searchId}. My ItemWriter simply iterates through the chunk of PlaceItems, builds the URL, then adds the URL to the sitemap.

Step 3

The third step is exactly the same as the previous, but this time processing is done on user profiles.

Below is my ItemReader declaration:

    @Bean
    public JdbcCursorItemReader<ProfileItem> profileItemReader() {
        JdbcCursorItemReader<ProfileItem> itemReader = new JdbcCursorItemReader<>();
        itemReader.setSql(environment.getRequiredProperty(PROP_NAME_SQL_PROFILES));
        itemReader.setDataSource(dataSource);
        itemReader.setRowMapper(new ProfileItemRowMapper());
        return itemReader;
    }

Below is my ItemWriter implementation:

    public class ProfileItemWriter implements ItemWriter<ProfileItem> {
        private static final Logger logger = LoggerFactory.getLogger(ProfileItemWriter.class);

        private final String rootUrl;

        @Inject
        private WebSitemapGenerator sitemapGenerator;

        public ProfileItemWriter(String rootUrl) {
            this.rootUrl = rootUrl;
        }

        @Override
        public void write(List<? extends ProfileItem> items) throws Exception {
            String url;
            for (ProfileItem profile : items) {
                url = rootUrl + "/profile/" + profile.getUsername();
                logger.info("Adding URL: " + url);
                sitemapGenerator.addUrl(url);
            }
        }

        public void setSitemapGenerator(WebSitemapGenerator sitemapGenerator) {
            this.sitemapGenerator = sitemapGenerator;
        }
    }

Profiles in CheckTheCrowd.com are accessed from URLs having this pattern: checkthecrowd.com/profile/{username}.

Step 4

The last step is fairly straightforward and is also implemented as a simple Tasklet:

    public class XmlWriterTasklet implements Tasklet {
        private static final Logger logger = LoggerFactory.getLogger(XmlWriterTasklet.class);

        @Inject
        private WebSitemapGenerator sitemapGenerator;

        @Override
        public RepeatStatus execute(StepContribution contribution, ChunkContext chunkContext) throws Exception {
            logger.info("Writing sitemap.xml...");
            sitemapGenerator.write();
            logger.info("Done.");
            return RepeatStatus.FINISHED;
        }
    }

Notice that I am using the same instance of WebSitemapGenerator across all the steps. It is declared in my Spring configuration as:

    @Bean
    public WebSitemapGenerator sitemapGenerator() throws Exception {
        String rootUrl = environment.getRequiredProperty(PROP_NAME_ROOT_URL);
        String deployDirectory = environment.getRequiredProperty(PROP_NAME_DEPLOY_PATH);
        return WebSitemapGenerator.builder(rootUrl, new File(deployDirectory))
            .allowMultipleSitemaps(true).maxUrls(1000).build();
    }

Because they change between environments (dev vs prod), rootUrl and deployDirectory are both configured from a properties file.

Wiring them all together…

    <beans>
        <context:component-scan base-package="com.checkthecrowd.batch.sitemapgen.config" />

        <bean class="...config.SitemapGenConfig" />
        <bean class="...config.java.process.ConfigurationPostProcessor" />

        <batch:job id="generateSitemap" job-repository="jobRepository">
            <batch:step id="insertStaticPages" next="insertPlacePages">
                <batch:tasklet ref="staticPagesInitializerTasklet" />
            </batch:step>
            <batch:step id="insertPlacePages" parent="abstractParentStep" next="insertProfilePages">
                <batch:tasklet>
                    <batch:chunk reader="placeItemReader" writer="placeItemWriter" />
                </batch:tasklet>
            </batch:step>
            <batch:step id="insertProfilePages" parent="abstractParentStep" next="writeXml">
                <batch:tasklet>
                    <batch:chunk reader="profileItemReader" writer="profileItemWriter" />
                </batch:tasklet>
            </batch:step>
            <batch:step id="writeXml">
                <batch:tasklet ref="xmlWriterTasklet" />
            </batch:step>
        </batch:job>

        <batch:step id="abstractParentStep" abstract="true">
            <batch:tasklet>
                <batch:chunk commit-interval="100" />
            </batch:tasklet>
        </batch:step>
    </beans>

Lines 26-30 declare an abstract step which serves as the common parent for steps 2 and 3. It sets a property called commit-interval, which defines how many items make up a chunk. In this case, a chunk consists of 100 items.

There is a lot more to Spring Batch; kindly refer to the official reference guide.
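To make the effect of commit-interval concrete, the sketch below groups a list of items into chunks of a given size using plain Java. It is not Spring Batch code and does not reproduce how the framework works internally (Spring Batch reads items one at a time and hands them to the writer once the interval is reached); the class name `ChunkingSketch` and the method `chunk` are invented for illustration.

```java
import java.util.ArrayList;
import java.util.List;

class ChunkingSketch {
    // Splits items into consecutive chunks of at most commitInterval elements.
    static <T> List<List<T>> chunk(List<T> items, int commitInterval) {
        if (commitInterval <= 0) {
            throw new IllegalArgumentException("commitInterval must be positive");
        }
        List<List<T>> chunks = new ArrayList<>();
        for (int start = 0; start < items.size(); start += commitInterval) {
            int end = Math.min(start + commitInterval, items.size());
            chunks.add(new ArrayList<>(items.subList(start, end)));
        }
        return chunks;
    }

    public static void main(String[] args) {
        // 250 made-up place IDs processed with a commit interval of 100.
        List<Integer> placeIds = new ArrayList<>();
        for (int id = 1; id <= 250; id++) {
            placeIds.add(id);
        }
        for (List<Integer> oneChunk : chunk(placeIds, 100)) {
            // In the real job, each chunk would be passed to the ItemWriter's write() method.
            System.out.println("writing chunk of " + oneChunk.size() + " items");
        }
    }
}
```

With 250 items and an interval of 100, the writer would be called three times, with 100, 100 and 50 items.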

25 Wednesday Mar 2015

Posted in Uncategorized

A question I get a lot is what the difference is between Java interfaces and abstract classes, and when to use each. Having answered this question by email multiple times, I decided to write this tutorial about Java interfaces vs abstract classes.

Java interfaces are used to decouple the interface of some component from the implementation. In other words, to make the classes using the interface independent of the classes implementing the interface. Thus, you can exchange the implementation of the interface, without having to change the class using the interface.

Abstract classes are typically used as base classes for extension by subclasses. Some programming languages use abstract classes to achieve polymorphism, and to separate interface from implementation, but in Java you use interfaces for that. Remember, a Java class can only have 1 superclass, but it can implement multiple interfaces. Thus, if a class already has a different superclass, it can implement an interface, but it cannot extend another abstract class. Therefore interfaces are a more flexible mechanism for exposing a common interface.

If you need to separate an interface from its implementation, use an interface. If you also need to provide a base class or default implementation of the interface, add an abstract class (or normal class) that implements the interface.

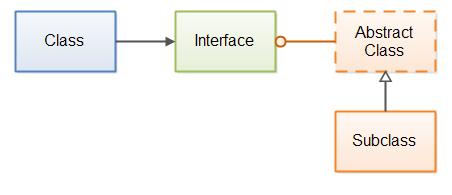

Here is an example showing a class referencing an interface, an abstract class implementing that interface, and a subclass extending the abstract class.

The blue class knows only the interface. The abstract class implements the interface, and the subclass inherits from the abstract class.

Below are the code examples from the text on Java Abstract Classes, but with an interface added which is implemented by the abstract base class. That way it resembles the diagram above.

First the interface:

    import java.io.IOException;
    import java.net.URL;

    public interface URLProcessor {
        public void process(URL url) throws IOException;
    }

Second, the abstract base class:

    import java.io.IOException;
    import java.io.InputStream;
    import java.net.URL;
    import java.net.URLConnection;

    public abstract class URLProcessorBase implements URLProcessor {

        public void process(URL url) throws IOException {
            URLConnection urlConnection = url.openConnection();
            InputStream input = urlConnection.getInputStream();
            try {
                processURLData(input);
            } finally {
                input.close();
            }
        }

        protected abstract void processURLData(InputStream input)
            throws IOException;
    }

Third, the subclass of the abstract base class:

    import java.io.IOException;
    import java.io.InputStream;

    public class URLProcessorImpl extends URLProcessorBase {

        @Override
        protected void processURLData(InputStream input) throws IOException {
            int data = input.read();
            while (data != -1) {
                System.out.println((char) data);
                data = input.read();
            }
        }
    }

Fourth, how to use the interface URLProcessor as the variable type, even though it is the subclass URLProcessorImpl that is instantiated.

    URLProcessor urlProcessor = new URLProcessorImpl();
    urlProcessor.process(new URL("http://jenkov.com"));

Using both an interface and an abstract base class makes your code more flexible. It is possible to implement simple URL processors simply by subclassing the abstract base class. If you need something more advanced, your URL processor can just implement the URLProcessor interface directly, and not inherit from URLProcessorBase.
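As a rough illustration of that last option, here is a sketch of a processor that implements the interface directly and skips the abstract base class. The interface is repeated (without the public modifier) so the example compiles on its own; `HostRecordingProcessor` and `DirectImplementationDemo` are hypothetical names, and the processor deliberately never opens a connection, it only records the host of each URL it is given.

```java
import java.io.IOException;
import java.net.URI;
import java.net.URL;
import java.util.ArrayList;
import java.util.List;

// Same contract as the URLProcessor interface in the tutorial text.
interface URLProcessor {
    void process(URL url) throws IOException;
}

// Hypothetical processor that does not extend URLProcessorBase:
// it opens no connection and just remembers which hosts it was asked to process.
class HostRecordingProcessor implements URLProcessor {
    private final List<String> hosts = new ArrayList<>();

    @Override
    public void process(URL url) throws IOException {
        hosts.add(url.getHost());
    }

    List<String> getHosts() {
        return hosts;
    }
}

class DirectImplementationDemo {
    public static void main(String[] args) throws IOException {
        HostRecordingProcessor recorder = new HostRecordingProcessor();
        // Callers still depend only on the interface type.
        URLProcessor processor = recorder;
        processor.process(URI.create("http://jenkov.com").toURL());
        System.out.println(recorder.getHosts());
    }
}
```

Code that holds a URLProcessor reference cannot tell whether it is talking to a subclass of the abstract base class or to a stand-alone implementation like this one, which is the decoupling the interface is meant to provide.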

Src:

http://tutorials.jenkov.com/java/interfaces-vs-abstract-classes.html

10 Tuesday Feb 2015

Posted in Uncategorized

from: http://enable-cors.org/server_nginx.html

The following Nginx configuration enables CORS, with support for preflight requests, using a regular expression to define a whitelist of allowed origins, and various default values that may be needed to work around incorrect browser implementations.

#

# A CORS (Cross-Origin Resource Sharing) config for nginx

#

# == Purpose

#

# This nginx configuration enables CORS requests in the following way:

# - enables CORS just for origins on a whitelist specified by a regular expression

# - CORS preflight requests (OPTIONS) are answered immediately

# - Access-Control-Allow-Credentials=true for GET and POST requests

# - Access-Control-Max-Age=20days, to minimize repetitive OPTIONS requests

# - various superfluous settings to accommodate nonconformant browsers

#

# == Comment on echoing Access-Control-Allow-Origin

#

# How do you allow CORS requests only from certain domains? The last

# published W3C candidate recommendation states that the

# Access-Control-Allow-Origin header can include a list of origins.

# (See: http://www.w3.org/TR/2013/CR-cors-20130129/#access-control-allow-origin-response-header )

# However, browsers do not support this well and it likely will be

# dropped from the spec (see, http://www.rfc-editor.org/errata_search.php?rfc=6454&eid=3249 ).

#

# The usual workaround is for the server to keep a whitelist of

# acceptable origins (as a regular expression), match the request's

# Origin header against the list, and echo back the matched value.

#

# (Yes you can use '*' to accept all origins but this is too open and

# prevents using 'Access-Control-Allow-Credentials: true', which is

# needed for HTTP Basic Access authentication.)

#

# == Comment on spec

#

# Comments below are all based on my reading of the CORS spec as of

# 2013-Jan-29 ( http://www.w3.org/TR/2013/CR-cors-20130129/ ), the

# XMLHttpRequest spec (

# http://www.w3.org/TR/2012/WD-XMLHttpRequest-20121206/ ), and

# experimentation with latest versions of Firefox, Chrome, Safari at

# that point in time.

#

# == Changelog

#

# shared at: https://gist.github.com/algal/5480916

# based on: https://gist.github.com/alexjs/4165271

#

location / {

# if the request included an Origin: header with an origin on the whitelist,

# then it is some kind of CORS request.

# specifically, this example allows CORS requests from

# scheme : http or https

# authority : any authority ending in ".mckinsey.com"

# port : nothing, or ":" followed by digits

if ($http_origin ~* (https?://[^/]*\.mckinsey\.com(:[0-9]+)?)$) {

set $cors "true";

}

# Nginx doesn't support nested If statements, so we use string

# concatenation to create a flag for compound conditions

# OPTIONS indicates a CORS pre-flight request

if ($request_method = 'OPTIONS') {

set $cors "${cors}options";

}

# non-OPTIONS indicates a normal CORS request

if ($request_method = 'GET') {

set $cors "${cors}get";

}

if ($request_method = 'POST') {

set $cors "${cors}post";

}

# if it's a GET or POST, set the standard CORS response headers

if ($cors = "trueget") {

# Tells the browser this origin may make cross-origin requests

# (Here, we echo the requesting origin, which matched the whitelist.)

add_header 'Access-Control-Allow-Origin' "$http_origin";

# Tells the browser it may show the response, when XmlHttpRequest.withCredentials=true.

add_header 'Access-Control-Allow-Credentials' 'true';

# # Tell the browser which response headers the JS can see, besides the "simple response headers"

# add_header 'Access-Control-Expose-Headers' 'myresponseheader';

}

if ($cors = "truepost") {

# Tells the browser this origin may make cross-origin requests

# (Here, we echo the requesting origin, which matched the whitelist.)

add_header 'Access-Control-Allow-Origin' "$http_origin";

# Tells the browser it may show the response, when XmlHttpRequest.withCredentials=true.

add_header 'Access-Control-Allow-Credentials' 'true';

# # Tell the browser which response headers the JS can see, besides the "simple response headers"

# add_header 'Access-Control-Expose-Headers' 'myresponseheader';

}

# if it's OPTIONS, then it's a CORS preflight request so respond immediately with no response body

if ($cors = "trueoptions") {

# Tells the browser this origin may make cross-origin requests

# (Here, we echo the requesting origin, which matched the whitelist.)

add_header 'Access-Control-Allow-Origin' "$http_origin";

# in a preflight response, tells browser the subsequent actual request can include user credentials (e.g., cookies)

add_header 'Access-Control-Allow-Credentials' 'true';

#

# Return special preflight info

#

# Tell browser to cache this pre-flight info for 20 days

add_header 'Access-Control-Max-Age' 1728000;

# Tell browser we respond to GET,POST,OPTIONS in normal CORS requests.

#

# Not officially needed but still included to help non-conforming browsers.

#

# OPTIONS should not be needed here, since the field is used

# to indicate methods allowed for "actual request" not the

# preflight request.

#

# GET,POST also should not be needed, since the "simple

# methods" GET,POST,HEAD are included by default.

#

# We should only need this header for non-simple request

# methods (e.g., DELETE), or custom request methods (e.g., XMODIFY)

add_header 'Access-Control-Allow-Methods' 'GET, POST, OPTIONS';

# Tell browser we accept these headers in the actual request

#

# A dynamic, wide-open config would just echo back all the headers

# listed in the preflight request's

# Access-Control-Request-Headers.

#

# A dynamic, restrictive config, would just echo back the

# subset of Access-Control-Request-Headers headers which are

# allowed for this resource.

#

# This static, fairly open config just returns a hardcoded set of

# headers that covers many cases, including some headers that

# are officially unnecessary but actually needed to support

# non-conforming browsers

#

# Comment on some particular headers below:

#

# Authorization -- practically and officially needed to support

# requests using HTTP Basic Access authentication. Browser JS

# can use HTTP BA authentication with an XmlHttpRequest object

# req by calling

#

# req.withCredentials=true, and

# req.setRequestHeader('Authorization','Basic ' + window.btoa(theusername + ':' + thepassword))

#

# Counterintuitively, the username and password fields on

# XmlHttpRequest#open cannot be used to set the authorization

# field automatically for CORS requests.

#

# Content-Type -- this is a "simple header" only when its

# value is either application/x-www-form-urlencoded,

# multipart/form-data, or text/plain; and in that case it does

# not officially need to be included. But, if your browser

# code sets the content type as application/json, for example,

# then that makes the header non-simple, and then your server

# must declare that it allows the Content-Type header.

#

# Accept,Accept-Language,Content-Language -- these are the

# "simple headers" and they are officially never

# required. Practically, possibly required.

#

# Origin -- logically, should not need to be explicitly

# required, since it's implicitly required by all of

# CORS. officially, it is unclear if it is required or

# forbidden! practically, probably required by existing

# browsers (Gecko does not request it but WebKit does, so

# WebKit might choke if it's not returned back).

#

# User-Agent,DNT -- officially, should not be required, as

# they cannot be set as "author request headers". practically,

# may be required.

#

# My Comment:

#

# The specs are contradictory, or else just confusing to me,

# in how they describe certain headers as required by CORS but

# forbidden by XmlHttpRequest. The CORS spec says the browser

# is supposed to set Access-Control-Request-Headers to include

# only "author request headers" (section 7.1.5). And then the

# server is supposed to use Access-Control-Allow-Headers to

# echo back the subset of those which is allowed, telling the

# browser that it should not continue and perform the actual

# request if it includes additional headers (section 7.1.5,

# step 8). So this implies the browser client code must take

# care to include all necessary headers as author request

# headers.

#

# However, the spec for XmlHttpRequest#setRequestHeader

# (section 4.6.2) provides a long list of headers which the

# browser client code is forbidden to set, including for

# instance Origin, DNT (do not track), User-Agent, etc.. This

# is understandable: these are all headers that we want the

# browser itself to control, so that malicious browser client

# code cannot spoof them and for instance pretend to be from a

# different origin, etc..

#

# But if XmlHttpRequest forbids the browser client code from

# setting these (as per the XmlHttpRequest spec), then they

# are not author request headers. And if they are not author

# request headers, then the browser should not include them in

# the preflight request's Access-Control-Request-Headers. And

# if they are not included in Access-Control-Request-Headers,

# then they should not be echoed by

# Access-Control-Allow-Headers. And if they are not echoed by

# Access-Control-Allow-Headers, then the browser should not

# continue and execute the actual request. So this seems to imply

# that the CORS and XmlHttpRequest specs forbid certain

# widely-used fields in CORS requests, including the Origin

# field, which they also require for CORS requests.

#

# The bottom line: it seems there are headers needed for the

# web and CORS to work, which at the moment you should

# hard-code into Access-Control-Allow-Headers, although

# official specs imply this should not be necessary.

#

add_header 'Access-Control-Allow-Headers' 'Authorization,Content-Type,Accept,Origin,User-Agent,DNT,Cache-Control,X-Mx-ReqToken,Keep-Alive,X-Requested-With,If-Modified-Since';

# build entire response to the preflight request

# no body in this response

add_header 'Content-Length' 0;

# (should not be necessary, but included for non-conforming browsers)

add_header 'Content-Type' 'text/plain; charset=UTF-8';

# indicate successful return with no content

return 204;

}

# --PUT YOUR REGULAR NGINX CODE HERE--

}
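The config above works around the lack of nested if statements by concatenating a flag string. As a rough illustration only, the same decision logic can be sketched in Python. The names ORIGIN_RE, METHOD_SUFFIX, cors_flag and cors_action are hypothetical and are not part of Nginx; the pattern is copied from the config as written.

```python
import re

# Same pattern as the `if ($http_origin ~* ...)` test; `~*` is case-insensitive.
ORIGIN_RE = re.compile(r"(https?://[^/]*\.mckinsey\.com(:[0-9]+)?)$", re.IGNORECASE)

# Suffix appended to the flag for each request method the config handles.
METHOD_SUFFIX = {"OPTIONS": "options", "GET": "get", "POST": "post"}


def cors_flag(origin, method):
    """Build the compound flag the way the chained `set $cors ...` lines do."""
    flag = "true" if origin and ORIGIN_RE.search(origin) else ""
    return flag + METHOD_SUFFIX.get(method, "")


def cors_action(flag):
    """Map the flag to the branch the config would take."""
    if flag == "trueoptions":
        return "preflight"  # add the preflight headers and return 204
    if flag in ("trueget", "truepost"):
        return "actual"     # add Allow-Origin and Allow-Credentials
    return "none"           # no CORS headers are added


for origin, method in [
    ("https://app.mckinsey.com", "OPTIONS"),
    ("http://a.b.mckinsey.com:8080", "GET"),
    ("https://evil.example", "POST"),
    ("https://app.mckinsey.com", "DELETE"),
]:
    flag = cors_flag(origin, method)
    print(origin, method, repr(flag), cors_action(flag))
```

Note that a whitelisted origin using a method other than GET, POST or OPTIONS ends up with the flag "true", which matches none of the branches, so such requests get no CORS headers under this config.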

06 Friday Feb 2015

Posted in Uncategorized

Question:

I recently forked a project and applied several fixes. I then created a pull request, which was accepted.

A few days later another change was made by another contributor. So my fork doesn’t contain that change… How can I get that change into my fork?

Do I need to delete and re-create my fork when I have further changes to contribute, or is there an update button?

Answer:

In your local clone of your forked repository, you can add the original GitHub repository as a “remote”. (“Remotes” are like nicknames for the URLs of repositories – origin is one, for example.) Then you can fetch all the branches from that upstream repository, and rebase your work to continue working on the upstream version. In terms of commands that might look like:

# Add the remote, call it "upstream":

git remote add upstream https://github.com/whoever/whatever.git

# Fetch all the branches of that remote into remote-tracking branches,

# such as upstream/master:

git fetch upstream

# Make sure that you're on your master branch:

git checkout master

# Rewrite your master branch so that any commits of yours that

# aren't already in upstream/master are replayed on top of that

# other branch:

git rebase upstream/master

If you don’t want to rewrite the history of your master branch (for example, because other people may have cloned it), then you should replace the last command with git merge upstream/master. However, for making further pull requests that are as clean as possible, it’s probably better to rebase.

Update: If you’ve rebased your branch onto upstream/master you may need to force the push in order to push it to your own forked repository on GitHub. You’d do that with:

git push -f origin master

You only need to use -f the first time you push after rebasing.

Reference:

http://stackoverflow.com/questions/7244321/how-to-update-github-forked-repository

06 Friday Feb 2015

Posted in Uncategorized

I have recently been working with Broadleaf Commerce, an open source template for e-commerce websites.

By default the demo runs in a Jetty container with an HSQL database. The official website describes how to migrate the database to MySQL and the configuration needed to deploy the project in Tomcat, but the process is not described in much detail and there are few online resources on the topic, so I decided to write this post as a summary.

Migration 1: database (HSQL to MySQL)

(a) Open the pom.xml file in the root directory of the DemoSite project and add the following inside the <dependencyManagement> section:

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.26</version>

<type>jar</type>

<scope>compile</scope>

</dependency>

(b) Open the pom.xml files in the admin and site folders and add the following inside the <dependencies> section of each:

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

</dependency>

(c) Create a database named broadleaf in MySQL.

(d) Open context.xml in both admin/src/main/webapp/META-INF and site/src/main/webapp/META-INF and replace its content with the following (change the database settings such as user name and password to match your own environment):

<?xml version="1.0" encoding="UTF-8"?>

<Context>

<Resource name="jdbc/web"

auth="Container"

type="javax.sql.DataSource"

factory="org.apache.tomcat.jdbc.pool.DataSourceFactory"

testWhileIdle="true"

testOnBorrow="true"

testOnReturn="false"

validationQuery="SELECT 1"

timeBetweenEvictionRunsMillis="30000"

maxActive="15"

maxIdle="10"

minIdle="5"

removeAbandonedTimeout="60"

removeAbandoned="false"

logAbandoned="true"

minEvictableIdleTimeMillis="30000"

jdbcInterceptors="org.apache.tomcat.jdbc.pool.interceptor.ConnectionState;org.apache.tomcat.jdbc.pool.interceptor.StatementFinalizer"

username="root"

password="123"

driverClassName="com.mysql.jdbc.Driver"

url="jdbc:mysql://localhost:3306/broadleaf"/>

<Resource name="jdbc/storage"

auth="Container"

type="javax.sql.DataSource"

factory="org.apache.tomcat.jdbc.pool.DataSourceFactory"

testWhileIdle="true"

testOnBorrow="true"

testOnReturn="false"

validationQuery="SELECT 1"

timeBetweenEvictionRunsMillis="30000"

maxActive="15"

maxIdle="10"

minIdle="5"

removeAbandonedTimeout="60"

removeAbandoned="false"

logAbandoned="true"

minEvictableIdleTimeMillis="30000"

jdbcInterceptors="org.apache.tomcat.jdbc.pool.interceptor.ConnectionState;org.apache.tomcat.jdbc.pool.interceptor.StatementFinalizer"

username="root"

password="123"

driverClassName="com.mysql.jdbc.Driver"

url="jdbc:mysql://localhost:3306/broadleaf"/>

<Resource name="jdbc/secure"

auth="Container"

type="javax.sql.DataSource"

factory="org.apache.tomcat.jdbc.pool.DataSourceFactory"

testWhileIdle="true"

testOnBorrow="true"

testOnReturn="false"

validationQuery="SELECT 1"

timeBetweenEvictionRunsMillis="30000"

maxActive="15"

maxIdle="10"

minIdle="5"

removeAbandonedTimeout="60"

removeAbandoned="false"

logAbandoned="true"

minEvictableIdleTimeMillis="30000"

jdbcInterceptors="org.apache.tomcat.jdbc.pool.interceptor.ConnectionState;org.apache.tomcat.jdbc.pool.interceptor.StatementFinalizer"

username="root"

password="123"

driverClassName="com.mysql.jdbc.Driver"

url="jdbc:mysql://localhost:3306/broadleaf"/>

</Context>

(e) Open core/src/main/resources/runtime-properties/common-shared.properties and find the following three lines:

blPU.hibernate.dialect=org.hibernate.dialect.HSQLDialect

blCMSStorage.hibernate.dialect=org.hibernate.dialect.HSQLDialect

blSecurePU.hibernate.dialect=org.hibernate.dialect.HSQLDialect

Replace them with:

blPU.hibernate.dialect=org.hibernate.dialect.MySQL5InnoDBDialect

blSecurePU.hibernate.dialect=org.hibernate.dialect.MySQL5InnoDBDialect

blCMSStorage.hibernate.dialect=org.hibernate.dialect.MySQL5InnoDBDialect

(f) Open build.properties in the DemoSite root directory and find the following:

ant.hibernate.sql.ddl.dialect=org.hibernate.dialect.HSQLDialect

ant.blPU.url=jdbc:hsqldb:hsql://localhost/broadleaf

ant.blPU.userName=sa

ant.blPU.password=null

ant.blPU.driverClassName=org.hsqldb.jdbcDriver

ant.blSecurePU.url=jdbc:hsqldb:hsql://localhost/broadleaf

ant.blSecurePU.userName=sa

ant.blSecurePU.password=null

ant.blSecurePU.driverClassName=org.hsqldb.jdbcDriver

ant.blCMSStorage.url=jdbc:hsqldb:hsql://localhost/broadleaf

ant.blCMSStorage.userName=sa

ant.blCMSStorage.password=null

ant.blCMSStorage.driverClassName=org.hsqldb.jdbcDriver

Change it to match your own database configuration:

ant.hibernate.sql.ddl.dialect=org.hibernate.dialect.MySQL5InnoDBDialect

ant.blPU.url=jdbc:mysql://localhost:3306/broadleaf

ant.blPU.userName=root

ant.blPU.password=123

ant.blPU.driverClassName=com.mysql.jdbc.Driver

ant.blSecurePU.url=jdbc:mysql://localhost:3306/broadleaf

ant.blSecurePU.userName=root

ant.blSecurePU.password=123

ant.blSecurePU.driverClassName=com.mysql.jdbc.Driver

ant.blCMSStorage.url=jdbc:mysql://localhost:3306/broadleaf

ant.blCMSStorage.userName=root

ant.blCMSStorage.password=123

ant.blCMSStorage.driverClassName=com.mysql.jdbc.Driver

The database migration is now complete.
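A driverClassName that does not match the JDBC URL is an easy mistake to make when switching databases, and it only shows up when Tomcat tries to open a connection. The following is a minimal sketch of a check for the context.xml from step (d). The function name find_driver_mismatches and the EXPECTED_DRIVERS mapping are assumptions made for illustration (the MySQL class name is the one used by Connector/J 5.1.x); nothing here is part of Broadleaf or Tomcat.

```python
import xml.etree.ElementTree as ET

# Assumed mapping from the JDBC URL sub-protocol to the usual driver class.
EXPECTED_DRIVERS = {
    "mysql": "com.mysql.jdbc.Driver",
    "postgresql": "org.postgresql.Driver",
    "hsqldb": "org.hsqldb.jdbcDriver",
}


def find_driver_mismatches(context_xml):
    """Return one message per <Resource> whose driver does not fit its URL."""
    problems = []
    root = ET.fromstring(context_xml)
    for res in root.iter("Resource"):
        name = res.get("name", "?")
        url = res.get("url", "")
        driver = res.get("driverClassName", "")
        # A JDBC URL looks like jdbc:<sub-protocol>:<rest>
        parts = url.split(":")
        scheme = parts[1] if len(parts) > 2 and parts[0] == "jdbc" else ""
        expected = EXPECTED_DRIVERS.get(scheme)
        if expected is None:
            problems.append(f"{name}: unrecognised JDBC URL {url!r}")
        elif driver != expected:
            problems.append(f"{name}: {driver!r} does not match {scheme} URL")
    return problems


# Shortened sample with one consistent and one inconsistent resource.
SAMPLE = """
<Context>
  <Resource name="jdbc/web" driverClassName="com.mysql.jdbc.Driver"
            url="jdbc:mysql://localhost:3306/broadleaf"/>
  <Resource name="jdbc/secure" driverClassName="org.postgresql.Driver"
            url="jdbc:mysql://localhost:3306/broadleaf"/>
</Context>
"""

for problem in find_driver_mismatches(SAMPLE):
    print(problem)
```

To check a real file, pass the contents of admin/src/main/webapp/META-INF/context.xml (and the site equivalent) to the function; an empty result means every resource is consistent.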

Migration 2: server (Jetty to Tomcat 7)

(a) In the pom.xml files in the site and admin directories, add the following inside the <plugins> section:

<plugin>

<groupId>org.apache.tomcat.maven</groupId>

<artifactId>tomcat7-maven-plugin</artifactId>

<version>2.0</version>

<configuration>

<warSourceDirectory>${webappDirectory}</warSourceDirectory>

<path>/</path>

<port>${httpPort}</port>

<httpsPort>${httpsPort}</httpsPort>

<keystoreFile>${webappDirectory}/WEB-INF/blc-example.keystore</keystoreFile>

<keystorePass>broadleaf</keystorePass>

<password>broadleaf</password>

</configuration>

</plugin>

(b) In Eclipse, right-click the DemoSite project and run Maven clean and then Maven install from the Run As menu. After a successful build, the corresponding WAR packages are generated in the target folders of admin and site under DemoSite. In my case the two WAR packages were named admin.war and zk.war.

(c) My environment is Ubuntu, where the Tomcat webapps path is /var/lib/tomcat7/webapps. Copy admin.war and zk.war into that folder, then restart the Tomcat server:

sudo /etc/init.d/tomcat7 restart

The /var/log/tomcat7/catalina.out file then showed the following error:

Caused by: java.lang.OutOfMemoryError: Java heap space

at org.apache.tomcat.util.bcel.classfile.ClassParser.readMethods(ClassParser.java:268)

at org.apache.tomcat.util.bcel.classfile.ClassParser.parse(ClassParser.java:128)

at org.apache.catalina.startup.ContextConfig.processAnnotationsStream(ContextConfig.java:2105)

at org.apache.catalina.startup.ContextConfig.processAnnotationsJar(ContextConfig.java:1981)

at org.apache.catalina.startup.ContextConfig.processAnnotationsUrl(ContextConfig.java:1947)

at org.apache.catalina.startup.ContextConfig.processAnnotations(ContextConfig.java:1932)

at org.apache.catalina.startup.ContextConfig.webConfig(ContextConfig.java:1326)

at org.apache.catalina.startup.ContextConfig.configureStart(ContextConfig.java:878)

at org.apache.catalina.startup.ContextConfig.lifecycleEvent(ContextConfig.java:369)

at org.apache.catalina.util.LifecycleSupport.fireLifecycleEvent(LifecycleSupport.java:119)

at org.apache.catalina.util.LifecycleBase.fireLifecycleEvent(LifecycleBase.java:90)

at org.apache.catalina.core.StandardContext.startInternal(StandardContext.java:5179)

at org.apache.catalina.util.LifecycleBase.start(LifecycleBase.java:150)

at org.apache.catalina.core.ContainerBase.addChildInternal(ContainerBase.java:901)

at org.apache.catalina.core.ContainerBase.addChild(ContainerBase.java:877)

at org.apache.catalina.core.StandardHost.addChild(StandardHost.java:633)

at org.apache.catalina.startup.HostConfig.deployDirectory(HostConfig.java:1114)

at org.apache.catalina.startup.HostConfig$DeployDirectory.run(HostConfig.java:1673)

at java.util.concurrent.Executors$RunnableAdapter.call(Executors.java:471)

... 4 more

A search showed that this is an out-of-memory problem: the JVM heap is too small. The fix is as follows.

For catalina.sh on Ubuntu (the file is at /usr/share/tomcat7/bin/catalina.sh), add the following near the top of the file, after the #! line:

JAVA_OPTS='-server -Xms256m -Xmx512m -XX:PermSize=128M -XX:MaxPermSize=256M' # Note: the single quotes are required

For catalina.bat on Windows, add the following as the first line (here without single quotes):

set JAVA_OPTS=-server -Xms256m -Xmx512m -XX:PermSize=128M -XX:MaxPermSize=256M

(d) After making the change described in (c), restart the Tomcat server:

sudo /etc/init.d/tomcat7 restart

You can now open the storefront page at localhost:8080/zk and the admin page at localhost:8080/admin in a browser. The migration to the Tomcat server is complete.